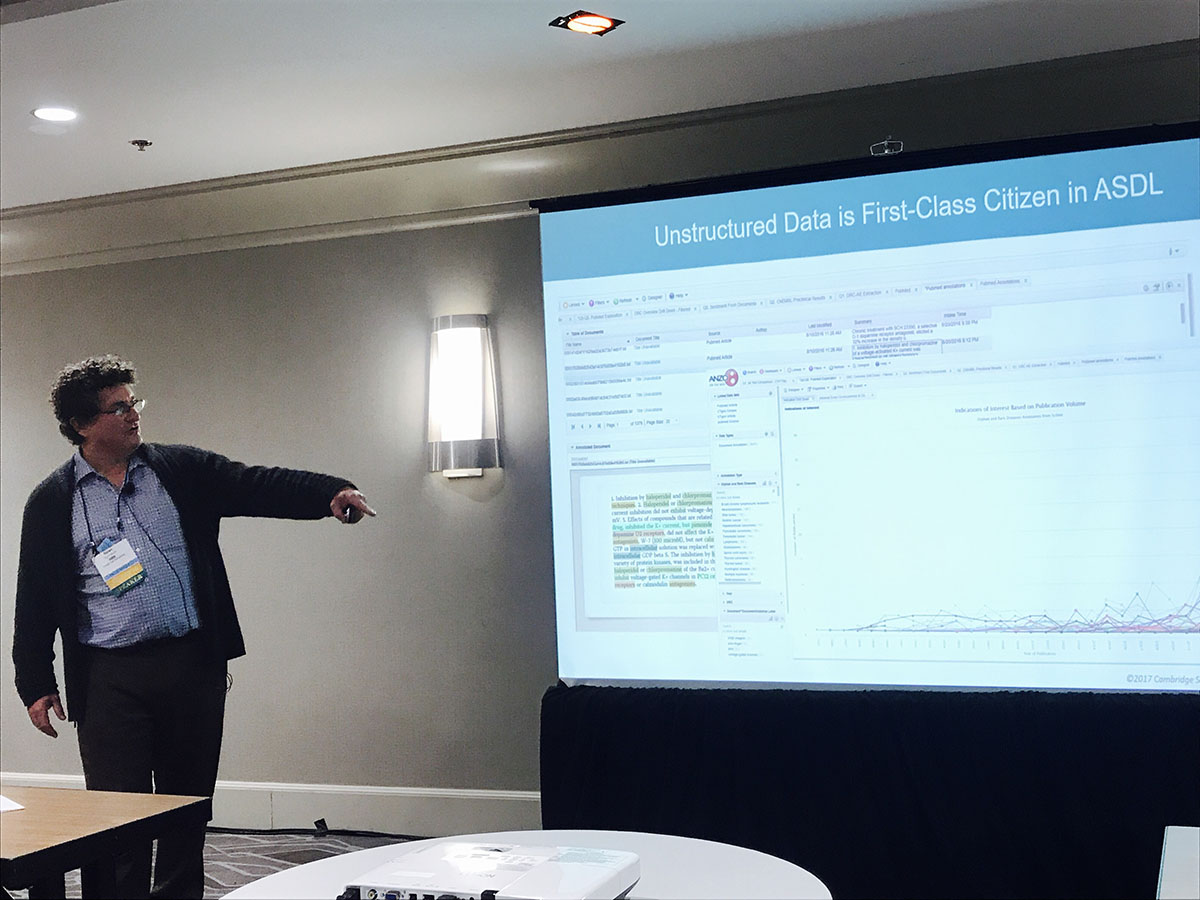

Regardless of the use case for which it is deployed, the Internet of Things is largely based on machine-generated data. Technologies involving sensor data, data streaming, and uninterrupted data...


Copyright © 2021 Cambridge Semantics. All Rights Reserved.
