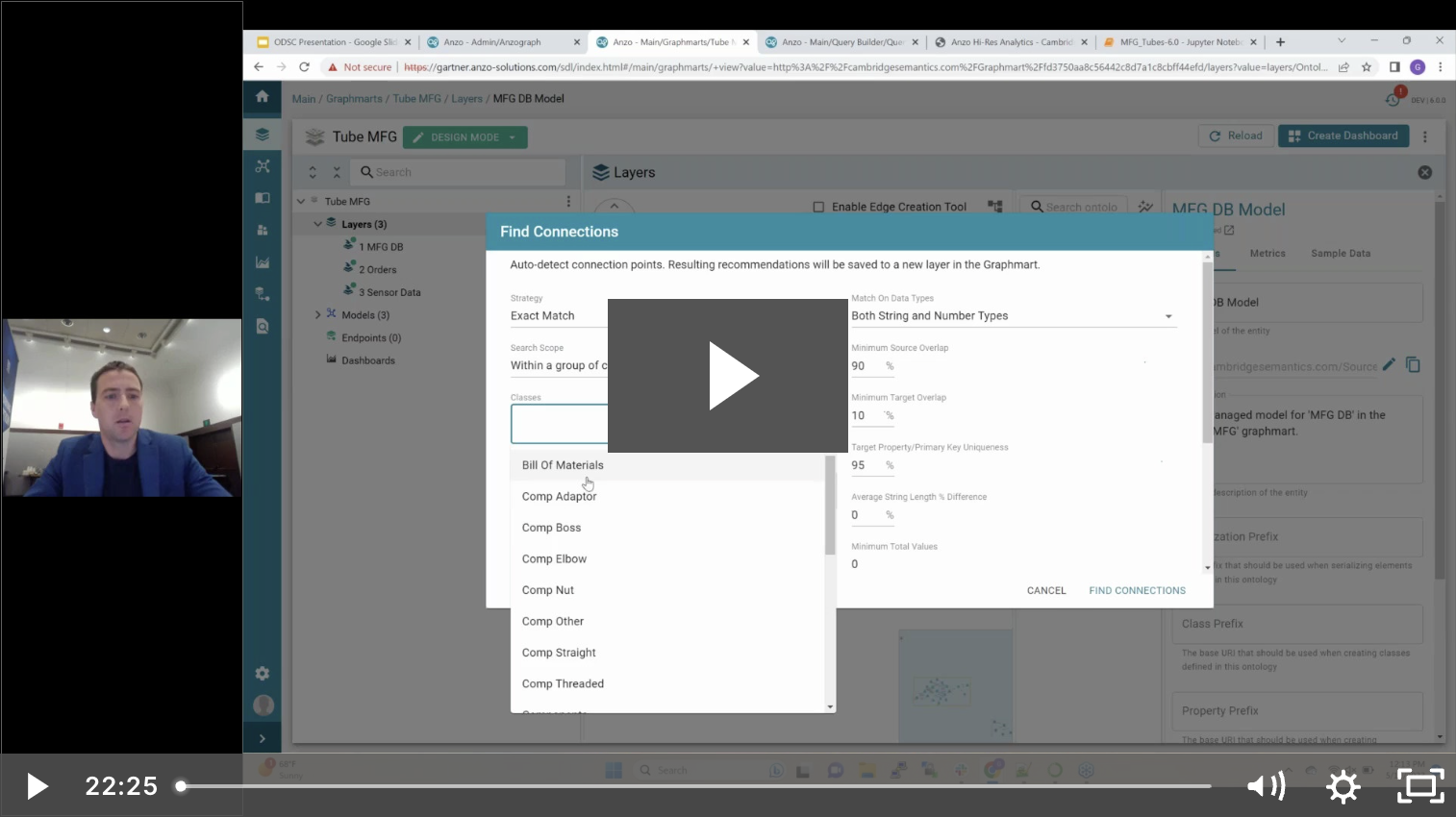

At Cambridge Semantics, we have watched the explosion of AI awareness in the last year with interest. Nearly every tech leader considering our knowledge graph platform, Anzo, is also actively...

1 Beacon St | 15th Floor | Boston, MA 02108

Copyright © 2021 Cambridge Semantics. All Rights Reserved.

Privacy Policy